Introduction

One of the core responsibilities of risk professionals is to provide actionable insights that help decision-makers make informed strategic choices. Traditionally, this has been done through qualitative assessments like heatmaps, color codes, or high-medium-low ratings. While useful for gauging likelihood, severity, and impact, these approaches are often subjective, prone to bias, and sometimes vague—raising questions such as how to prioritize risks that are rated the same.

In today’s interconnected and data-driven business environment, organizations need more than qualitative analysis. Quantitative risk assessments address the limitations of subjectivity by relying on data, historical patterns, and probability models to estimate outcomes. Financial institutions have long used this approach for credit, market, and liquidity risks—so why not apply it to non-financial risks like compliance failures, operational disruptions, or technology challenges? This eBook explores both qualitative and quantitative methodologies, with a focus on quantifying non-financial risks.

What is Risk Assessment?

Risk assessment, or risk analysis, is the process of analyzing potential risk events that may adversely impact an organization and comparing the resulting risk exposures with defined tolerance levels.

Performing risk assessments is one of the most important steps in the risk management process. Once risks faced by an organization have been identified, assessment and analysis are critical to unlocking deeper insights into the overall risk posture, understanding the factors that can have a negative impact, and determining proactive steps to mitigate and minimize them.

Risk Assessment Methodologies

There are two main types of risk assessment methodologies: qualitative risk assessment and quantitative risk assessment.

Qualitative Risk Assessment

Qualitative risk assessment is the process of determining the likelihood of a risk occurring, the impact it would have if the risk event occurred, and its severity.

The process involves recording the results in a risk assessment matrix, which can help risk professionals to quickly identify the top risks – those falling in the highest likelihood and impact categories.
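A risk assessment matrix can be sketched in a few lines of Python. This minimal example assumes a 5x5 matrix with likelihood and impact each rated 1 to 5, a cell score equal to likelihood multiplied by impact, and hypothetical High/Medium/Low cut-offs. Real programs calibrate their own scales and bands, and the function names and sample register here are illustrative only.

```python
# Illustrative 5x5 qualitative risk matrix.
# Assumption: likelihood and impact are rated 1 (lowest) to 5 (highest);
# the rating bands below are hypothetical cut-offs chosen for this example.

def risk_score(likelihood: int, impact: int) -> int:
    """Return the matrix cell score (likelihood x impact), from 1 to 25."""
    for value in (likelihood, impact):
        if not 1 <= value <= 5:
            raise ValueError("ratings must be integers from 1 to 5")
    return likelihood * impact


def risk_rating(likelihood: int, impact: int) -> str:
    """Map a matrix cell to a High/Medium/Low band (hypothetical thresholds)."""
    score = risk_score(likelihood, impact)
    if score >= 15:
        return "High"
    if score >= 6:
        return "Medium"
    return "Low"


# Hypothetical risk register: name -> (likelihood, impact)
register = {
    "Ransomware attack": (4, 5),
    "Key supplier failure": (5, 4),
    "Office fire": (2, 5),
    "Minor data-entry errors": (3, 1),
}

# Sort from highest to lowest score to surface the top risks.
ranked = sorted(register.items(), key=lambda item: risk_score(*item[1]), reverse=True)
for name, (lik, imp) in ranked:
    print(f"{name}: score {risk_score(lik, imp)}, {risk_rating(lik, imp)}")
```

In this sample register, the ransomware and supplier-failure risks both score 20 and both rate High, so the matrix alone cannot say which one to address first. That is the kind of ambiguity qualitative methods run into when several risks share a category.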

Advantages:

- The methodology is simple and requires little training, which enables quick adoption and helps the risk program mature.

- It helps to identify top risks in a quick and cost-effective manner.

- It helps to quickly prioritize risks.

- It is well suited to companies that are just starting their risk management process.

Disadvantages:

- It greatly depends on the knowledge and expertise of the assessor.

- It could be influenced by the assessor’s bias and perception.

- The analysis becomes ambiguous when multiple risks fall into the same category.

- It does not support cost-benefit analysis, and its subjective nature makes it difficult to evaluate the effectiveness of controls accurately.

Quantitative Risk Assessment

According to the International Risk Management Institute (IRMI), risk quantification is “forecasting of loss frequency and severity to make risk financing decisions. Dependable estimates of the likelihood and dollar amount of loss-causing events allow an organization to take appropriate steps now and in the future to minimize their financial impact.”

In simple terms, risk quantification means associating a monetary value with a risk. For example, an assessor might calculate an annual loss expectancy (the potential loss due to a risk in one year) of $1 million. A figure like this tells risk professionals how much the organization could lose if the risk materializes.

Advantages:

- It helps to accurately understand high-risk areas and risk exposure in financial terms.

- It provides realistic, actionable insights by presenting a range of outcomes rather than a single value.

- It makes it easier to prioritize risks and related business decisions.

- Associating a monetary value with risk enables CROs to communicate risk exposure effectively to top management and the board.

Disadvantages:

- The methodology is quite complex and requires advanced tools and experts.

- Quantitative results need to be backed by a qualitative explanation; otherwise, they could be misinterpreted.

- It is highly dependent on the availability of reliable data.

- It depends on the maturity of the risk function and might not be suitable for organizations of all sizes.

Qualitative vs Quantitative Risk Assessment: What's The Difference?

Quantitative risk assessment relies on data, metrics, and statistical models to evaluate risks, offering measurable results such as financial impact or probability percentages. On the other hand, qualitative risk assessment depends on expert opinions, prior experience, and subjective judgment to classify risks into categories like high, medium, or low based on their perceived likelihood and potential consequences.

Qualitative risk assessment determines the likelihood of a risk occurring, the impact it would have, and its severity; it is quick but subjective. Quantitative risk assessment takes a more objective and more detailed approach, relying on verified, pre-existing data and statistics to measure the probability and potential impact of specific risks.

Comparison: Quantitative vs. Qualitative Risk Assessment

How to Quantify Non-Financial Risk (NFR)

What gets measured gets managed. A comprehensive risk management program must manage non-financial risks effectively as well. Quantifying NFR helps organizations evaluate whether their risk exposure is aligned with their risk appetite and within their tolerance level, whether they need to invest more to improve controls, how much investment is worthwhile, and more.

Value at Risk (VaR) is a way to quantify potential losses: it estimates the loss that, at a chosen confidence level, is not expected to be exceeded over a given period. Factor Analysis of Information Risk (FAIR™) is one of the most widely used VaR models for cybersecurity and operational risks.
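Stated formally, using the standard textbook definition (given here for reference, not as FAIR's own notation): for a loss L over a chosen period and a confidence level α, such as 95%, VaR is the smallest loss threshold ℓ that is exceeded with probability no greater than 1 − α.

```latex
\mathrm{VaR}_{\alpha}(L) = \inf\{\, \ell \in \mathbb{R} : P(L > \ell) \le 1 - \alpha \,\}
```

For instance, a hypothetical one-year 95% VaR of $2 million would mean a 5% chance that losses over the year exceed $2 million.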

FAIR™

In the words of the FAIR Institute, “FAIR provides a model for understanding, analyzing and quantifying cyber risk and operational risk in financial terms.”

The model is based on the premise that risk is inherently uncertain, so the focus should be not on what is possible but on the probability that a risk event will occur and the resulting loss exposure. By letting assessors express the factors contributing to a risk in quantitative terms, such as numbers, percentages, and monetary values, it helps estimate the probable frequency and magnitude of loss. It also provides a structured way to measure and analyze complex risk scenarios.

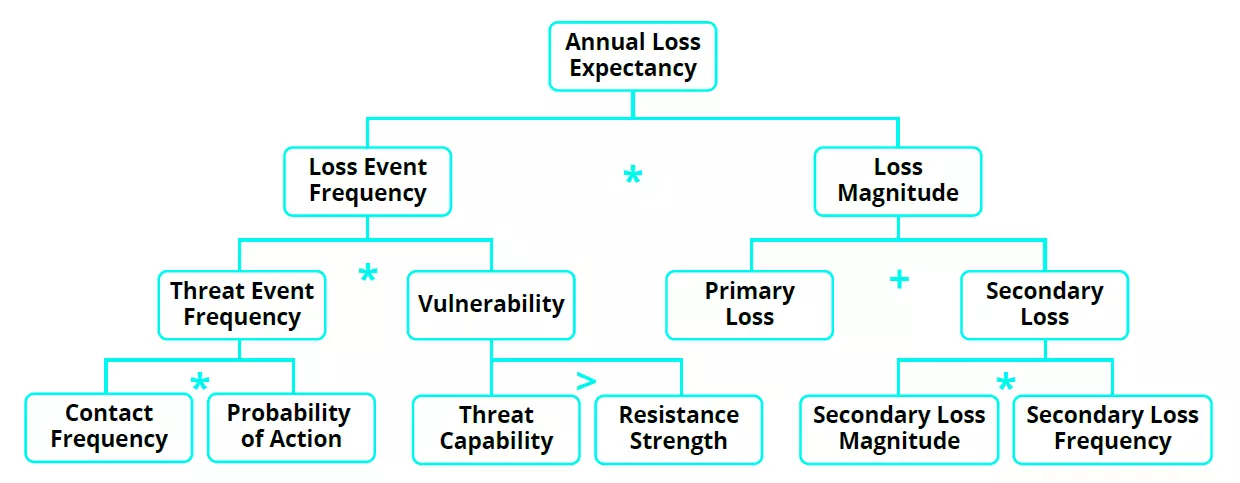

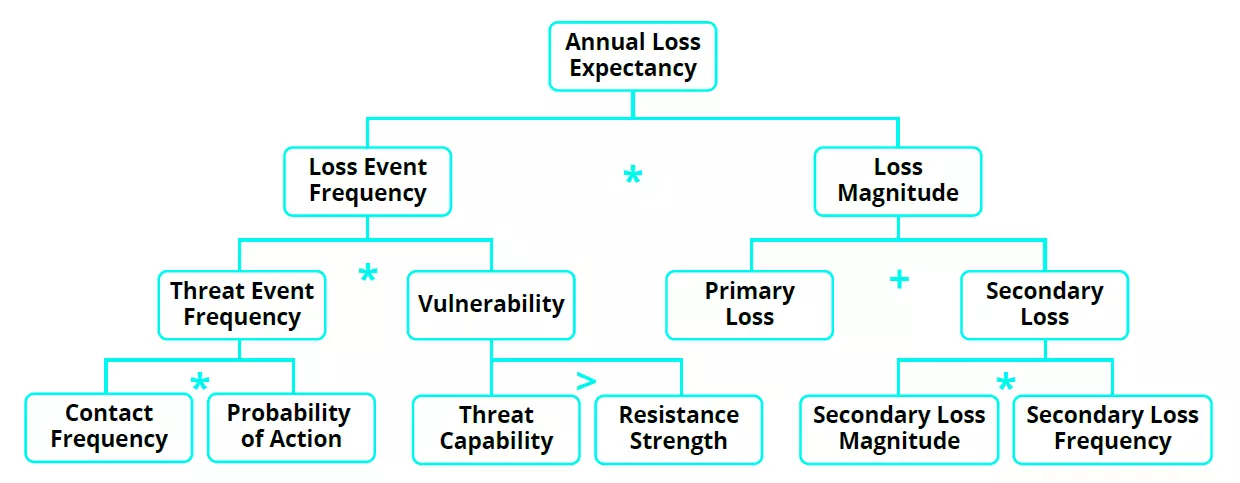

FAIR helps calculate total loss exposure, loss event frequency, loss magnitude, threat event frequency, susceptibility, and primary and secondary loss.

Annual Loss Expectancy

Annual Loss Expectancy (ALE) is derived from Single Loss Expectancy (SLE), which is simply the loss that could result from a single risk event. For example, consider the risk of fire. If the organization's infrastructure, including the office building and furniture, is valued at $100,000 and a fire destroys 75% of it, the monetary loss to the organization is $75,000. In this example, the SLE is $75,000.

Once the SLE is known, the ALE is calculated by multiplying the SLE by the expected number of occurrences per year, often called the annualized rate of occurrence (ARO). For example, if a risk event can occur 5 times a year and the organization would lose $1,000 each time, the ALE is $5,000.

In another scenario, suppose a single risk event can cause a loss of $100,000 but occurs only once every 5 years. The ARO is then 0.2, so the ALE is $20,000.
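The arithmetic in these examples fits in two small Python functions. The function names, and the "exposure factor" (the share of an asset's value lost in one event), are illustrative choices; ARO (annualized rate of occurrence) is the common name for the expected number of events per year. This is a sketch of the simple SLE/ALE formulas, not of a full FAIR model.

```python
# Classic SLE/ALE arithmetic (a sketch; names are illustrative):
#   SLE = asset value x exposure factor (share of the asset lost in one event)
#   ALE = SLE x ARO (annualized rate of occurrence: expected events per year)

def single_loss_expectancy(asset_value: float, exposure_factor: float) -> float:
    """Loss from one occurrence of the risk event."""
    if not 0.0 <= exposure_factor <= 1.0:
        raise ValueError("exposure_factor must be between 0 and 1")
    return asset_value * exposure_factor


def annual_loss_expectancy(sle: float, aro: float) -> float:
    """Expected loss per year: loss per event x events per year."""
    if aro < 0:
        raise ValueError("aro cannot be negative")
    return sle * aro


# Fire example: $100,000 of infrastructure, 75% destroyed in one fire.
fire_sle = single_loss_expectancy(100_000, 0.75)
# Frequent, smaller losses: $1,000 per event, 5 events per year.
frequent_ale = annual_loss_expectancy(1_000, 5)
# Rare, large loss: $100,000 per event, once every 5 years (ARO = 1/5).
rare_ale = annual_loss_expectancy(100_000, 1 / 5)

print(f"SLE: ${fire_sle:,.0f}")                       # $75,000
print(f"ALE, 5 events a year: ${frequent_ale:,.0f}")  # $5,000
print(f"ALE, once in 5 years: ${rare_ale:,.0f}")      # $20,000
```

The two ALE results show why annualizing matters: a loss twenty times larger per event produces only four times the annual exposure when it occurs once every five years.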

Here’s a quick look at these terms (as defined by the FAIR Institute):

Loss Event Frequency

The probable frequency that a threat action will result in loss within a given timeframe.

Threat Event Frequency

The probable frequency that a threat agent will act against an asset within a given timeframe.

Loss Magnitude

The probable magnitude of primary and secondary loss resulting from an event.

- Primary Loss Magnitude is the loss incurred from the loss event itself, for example, the direct financial impact of a fire outbreak. It also includes activities that the primary stakeholder chooses to undertake in the wake of the loss event, such as investigating the incident or replacing a damaged server.

- Secondary Loss Magnitude is the loss incurred from the reactions of outside parties (or “secondary stakeholders”) to the loss event. For example, secondary losses arise when affected parties respond to a data breach that exposes employees' personal information, or when a regulator levies a fine or penalty due to an operational failure or ineffective control.

Vulnerability

The probability that a threat event becomes a loss event; in other words, the probability that an asset will be unable to resist a threat agent.

Threat Capability

The probable level of force (as embodied by time, resources, and technological capability) that a threat agent can apply against an asset; in other words, how capable the threat agent is of overcoming the asset's defenses.

Resistance Strength

The strength of a control compared with the threat capability; in other words, how well a control protects an asset from a threat.
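The relationships among these factors can be sketched in Python. In FAIR, loss event frequency is threat event frequency multiplied by vulnerability, and vulnerability can be estimated as the probability that threat capability exceeds resistance strength. The 0-100 scale, the triangular distributions, the function names, and every input value below are illustrative assumptions, not a reference implementation.

```python
import random

# Sketch of the FAIR relationships described above (inputs are hypothetical):
#   Vulnerability        ~ P(Threat Capability > Resistance Strength)
#   Loss Event Frequency = Threat Event Frequency x Vulnerability
#   Expected annual loss = Loss Event Frequency x Loss Magnitude
# Threat capability and resistance strength use an assumed 0-100 scale.

def estimate_vulnerability(tcap, rs, trials=10_000, seed=1):
    """Estimate the share of threat events that become loss events.

    tcap and rs are (min, most_likely, max) estimates, each sampled from a
    triangular distribution (a simplifying assumption).
    """
    rng = random.Random(seed)
    t_min, t_mode, t_max = tcap
    r_min, r_mode, r_max = rs
    loss_events = 0
    for _ in range(trials):
        threat_capability = rng.triangular(t_min, t_max, t_mode)
        resistance_strength = rng.triangular(r_min, r_max, r_mode)
        if threat_capability > resistance_strength:
            loss_events += 1
    return loss_events / trials


def expected_annual_loss(tef, vulnerability, loss_magnitude):
    """Combine point estimates: TEF x Vulnerability gives LEF; LEF x LM gives loss."""
    lef = tef * vulnerability  # loss events per year
    return lef * loss_magnitude


# Hypothetical phishing scenario: 12 threat events per year, a control rated
# somewhat stronger than the typical attacker, $50,000 per loss event.
vuln = estimate_vulnerability(tcap=(20, 50, 90), rs=(40, 70, 85))
print(f"Vulnerability: {vuln:.0%}")
print(f"Loss event frequency: {12 * vuln:.1f} per year")
print(f"Expected annual loss: ${expected_annual_loss(12, vuln, 50_000):,.0f}")
```

Strengthening the control (raising the resistance strength range) lowers vulnerability, which in turn lowers loss event frequency and the expected loss, without any change to how often attackers try.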

Monte Carlo Simulation

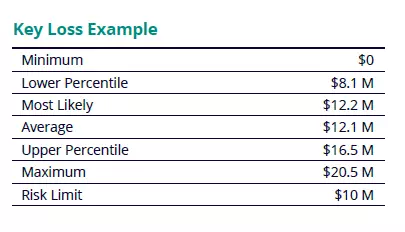

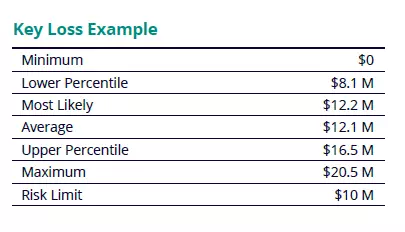

Monte Carlo simulation is one of the most widely used methods for running a FAIR analysis. The technique simulates a risk event, such as a supply chain disruption or a ransomware attack, many times and estimates the financial loss that could result in each run. The process generates a range of possible outcomes for the risk event, along with their relative probabilities.

To simplify further, the simulation runs many what-if analyses by drawing different values for each input variable, with each draw producing a different result. It then averages the results to arrive at an estimate. Ultimately, the technique reveals the full range of potential outcomes, how likely each outcome is, which factors have the greatest impact, and by how much.

For example, consider the risk of a natural disaster. Rather than a single point estimate, Monte Carlo simulation gives risk teams the range of possible outcomes. With a probability distribution table, organizations can quickly see their risk exposure at multiple levels and compare it with their risk limit to make better-informed decisions.
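The following Python sketch shows these mechanics for a natural-disaster scenario. Everything in it is an assumption for illustration: the triangular distributions (FAIR tooling often uses PERT-style distributions instead), the Poisson model for the number of events per year, the input ranges, and the $500,000 risk limit. It simulates many years, averages the results, and turns them into a simple probability table that is compared with the limit.

```python
import math
import random
import statistics

# Minimal Monte Carlo sketch of annual loss for one risk scenario.
# Assumptions (all hypothetical, for illustration):
#   - the yearly event rate is drawn from triangular(min, most likely, max)
#   - the number of events in a year is Poisson-distributed with that rate
#   - each event's loss is drawn from triangular(min, most likely, max)

def poisson(rng, lam):
    """Draw a Poisson event count using Knuth's method (fine for small lam)."""
    limit = math.exp(-lam)
    count, product = 0, 1.0
    while True:
        product *= rng.random()
        if product <= limit:
            return count
        count += 1


def simulate_annual_losses(rate, magnitude, years=10_000, seed=42):
    """Return one simulated total loss per simulated year.

    rate and magnitude are (min, most_likely, max) tuples.
    """
    rng = random.Random(seed)
    r_min, r_mode, r_max = rate
    m_min, m_mode, m_max = magnitude
    losses = []
    for _ in range(years):
        # random.triangular takes (low, high, mode).
        events = poisson(rng, rng.triangular(r_min, r_max, r_mode))
        losses.append(sum(rng.triangular(m_min, m_max, m_mode) for _ in range(events)))
    return losses


def exceedance_table(losses, thresholds):
    """Return (threshold, share of simulated years with loss above it) pairs."""
    n = len(losses)
    return [(t, sum(1 for x in losses if x > t) / n) for t in thresholds]


# Hypothetical natural-disaster scenario: 0.05 to 0.5 events per year
# (most likely 0.1), $200k to $5M per event (most likely $1M).
losses = simulate_annual_losses(rate=(0.05, 0.1, 0.5),
                                magnitude=(200_000, 1_000_000, 5_000_000))
risk_limit = 500_000  # hypothetical risk limit

deciles = statistics.quantiles(losses, n=10)  # nine cut points
print(f"Average annual loss: ${statistics.mean(losses):,.0f}")
print(f"90th percentile:     ${deciles[8]:,.0f}")
for threshold, share in exceedance_table(losses, [100_000, risk_limit, 1_000_000, 2_000_000]):
    marker = "  <- risk limit" if threshold == risk_limit else ""
    print(f"P(annual loss > ${threshold:>9,}) = {share:6.1%}{marker}")
```

Because most simulated years have no event at all, the average can sit far from any single year's outcome; the exceedance table makes that visible in a way a single ALE figure cannot.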

The Best Approach

From a practical viewpoint, the decision to perform a qualitative or quantitative risk assessment depends on what the risk assessor is trying to assess and what they expect to learn. For example, let’s consider the risk of fire hazards faced by an organization. An initial risk assessment would involve survey questions such as:

- Are the office premises located near a transformer or sources of combustion (heating, welding, etc.)?

- Are there any electrical issues or spills in the office building?

- How many fire incidents have occurred in the past?

- What is the probability of this risk event?

- Have any lives been lost to such incidents?

Many of these questions call for a yes/no answer or a judgment call, and they rely heavily on the expertise and knowledge of the assessor. Although qualitative risk assessments are subjective and can be influenced by an assessor's perception and bias, they are important for understanding the severity and likelihood of a risk event. At the same time, risk quantification, while valuable, depends heavily on the availability of reliable data and on the scale and maturity of the risk function. To truly understand and assess risks, organizations must use both qualitative and quantitative risk assessment methodologies.

"The deepest insights come from the widest perspectives. For true risk assessment, perform both qualitative and quantitative risk assessments to gain real visibility into the overall organizational and cyber risk posture. You may have heard it called a 360-degree view of risk…"

- Patricia McParland, AVP, Product Marketing, MetricStream

Modernize Your Risk Assessment Program with MetricStream

MetricStream enables organizations to effectively plan, schedule, manage, and perform risk and control assessments. It equips risk professionals to evaluate inherent and residual risks both quantitatively and qualitatively using configurable assessment methodologies.

With the Danube release, MetricStream brought advanced risk quantification capabilities to its Enterprise Risk Management and Operational Risk Management products. Risk teams can now express loss exposure in monetary terms. Expressing each risk against an external standard and in financial terms makes it easier for all stakeholders to grasp the relative importance of each risk and make better-informed decisions. Users can build custom models, use various factors and variables, and capture values for factors (e.g., threat event frequency) organized in a simple parent-child hierarchy. A range of estimation inputs (e.g., Min, Max, Most Likely, and Confidence) is available to improve the accuracy of quantification. MetricStream had previously rolled out risk quantification capabilities in its IT & Cyber Risk Management product.
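Min, Most Likely, and Max estimates are commonly turned into a modified PERT (scaled Beta) distribution, in which a shape parameter controls how tightly samples cluster around the most likely value. The Python sketch below shows that general technique. It is not MetricStream's implementation, and treating "Confidence" as the PERT shape parameter (conventionally 4) is an assumption; the function name and the estimate values are hypothetical.

```python
import random

# Sketch: turning Min / Most Likely / Max estimates plus a confidence setting
# into samples from a modified PERT (scaled Beta) distribution.
# Assumption: "confidence" plays the role of the PERT shape parameter lambda
# (4 is the conventional default; higher values cluster draws near the mode).

def sample_modified_pert(rng, minimum, most_likely, maximum, confidence=4.0):
    """Draw one value between minimum and maximum from a modified PERT."""
    if not (minimum <= most_likely <= maximum) or minimum == maximum:
        raise ValueError("need minimum <= most_likely <= maximum and minimum < maximum")
    if confidence <= 0:
        raise ValueError("confidence must be positive")
    span = maximum - minimum
    alpha = 1 + confidence * (most_likely - minimum) / span
    beta = 1 + confidence * (maximum - most_likely) / span
    return minimum + span * rng.betavariate(alpha, beta)


rng = random.Random(3)
# Hypothetical threat event frequency estimate: 2 to 20 per year, most likely 6.
for confidence in (1, 4, 10):
    draws = [sample_modified_pert(rng, 2, 6, 20, confidence) for _ in range(20_000)]
    mean = sum(draws) / len(draws)
    print(f"confidence={confidence:>2}: sample mean {mean:.2f}")
```

Higher confidence pulls the sample mean toward the most likely value. Analytically, the mean is (min + λ × most likely + max) / (λ + 2), which is about 9.33, 7.67, and 6.83 for the three settings here, so the sampled means should land close to those values.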

MetricStream Risk Assessment:

- Enables simple assessments by rating a risk, and advanced assessments using multiple factors and risk scoring, thereby promoting a positive risk culture

- Helps present risk exposure in monetary and quantitative terms, enabling risk-informed decisions

- Supports user-configurable risk formulas that match each customer's risk calculation methodology

- Helps assess the overall control environment by evaluating controls on multiple factors for design and operating effectiveness

- Supports aggregating risk scores using the weighted average method, where weights can be assigned across multiple dimensions, including organization, objective, product, process, assessable item, or risk hierarchy, for more accurate risk visibility
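Weighted-average aggregation itself is simple arithmetic: each child score is multiplied by its weight, and the sum is divided by the total weight. A minimal Python sketch follows; the 1-25 score scale, the weights, and the process names are hypothetical, and this is not MetricStream's implementation or API.

```python
# Sketch of weighted-average risk score aggregation.
# Hypothetical example: child risk scores rolled up to a parent (for example,
# a business unit), with weights reflecting each child's relative importance.

def weighted_average(scores_and_weights):
    """Aggregate (score, weight) pairs into one weighted-average score."""
    pairs = list(scores_and_weights)
    total_weight = sum(weight for _, weight in pairs)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(score * weight for score, weight in pairs) / total_weight


# Hypothetical residual risk scores (1-25 scale) for three processes,
# weighted by each process's share of revenue.
process_scores = {
    "Payments": (18, 0.5),
    "Onboarding": (9, 0.3),
    "Reporting": (4, 0.2),
}
unit_score = weighted_average(process_scores.values())
print(f"Business unit score: {unit_score:.1f}")
```

Dividing by the total weight means the weights do not have to sum to 1, which makes it easier to weight by raw figures such as revenue or headcount.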

FAQ

What is the difference between quantitative and qualitative risk assessment?

Quantitative risk assessment expresses risk in numerical terms, such as probability values, financial impact, or statistical models. Qualitative risk assessment uses descriptive ratings like high, medium, or low to evaluate risks based on judgment, experience, and contextual understanding.

What is an example of a qualitative risk assessment?

A team reviews potential project risks and labels each one as low, medium, or high based on likelihood and severity. These labels are used to prioritize actions without assigning specific numbers or financial values.

What is an example of a quantitative risk assessment?

A company estimates that a ransomware attack could result in a ₹2 crore loss with a 30% probability of occurring in a year. This numerical analysis helps calculate expected loss and guide investment decisions.
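As a worked example, assuming the attack happens at most once a year, the expected annual loss is the probability multiplied by the loss (1 crore = 100 lakh):

```latex
\text{Expected annual loss} = 0.30 \times 2\ \text{crore} = 0.6\ \text{crore} = 60\ \text{lakh (INR)}
```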

What is the primary purpose of using both quantitative and qualitative risk assessments?

Using both methods gives a fuller view of risk. Qualitative assessment brings context, expert insight, and speed, while quantitative assessment adds precision and financial clarity. Together, they support more balanced and informed decision-making.

What are non-financial risks?

Non-financial risks are risks that can harm an organization without directly involving financial market losses. These include operational failures, regulatory violations, cybersecurity incidents, reputational damage, and conduct-related issues.

How do you quantify non-financial risks?

Non-financial risks can be quantified using historical loss data, scenario analysis, risk scoring models, key risk indicators, and statistical methods that estimate potential operational or reputational impacts.

What are the key steps in a non-financial risk assessment lifecycle?

The lifecycle typically includes identifying risks, assessing their likelihood and impact, implementing controls to mitigate exposure, monitoring risk indicators, and regularly reviewing and updating assessments.

How do operational and strategic risks differ from non-financial risk?

Operational and strategic risks are categories within the broader group of non-financial risks. Operational risk relates to failures in processes, people, or systems, while strategic risk arises from business decisions, market changes, or competitive pressures.

Which frameworks support non-financial risk assessment?

Several frameworks support non-financial risk management, including COSO ERM, ISO 31000, Basel operational risk guidelines, and NIST frameworks for technology and cybersecurity risk.

How do qualitative and quantitative methods work together in risk management?

Many organizations use qualitative methods to identify and prioritize risks, then apply quantitative techniques to analyze high-impact risks in greater detail. This combined approach provides both strategic perspective and measurable insights for decision-making.
